How I plan content for LLM visibility

Follow these 3 layers to get your content to show up in ChatGPT

Didn’t see it at first — and honestly hesitated leaning into this angle — but since launching my product-led content (PLC) library offer earlier this year, an unanticipated outcome keeps showing up:

Product-educational content, aligned to the buyer’s journey and built to answer prompts people ask in LLMs, is helping my clients show up in ChatGPT and other AI tools.

That is:

LLMs recommend their SaaS tool when folks search for tools in their category

The content we’re putting out is driving traffic via ChatGPT to their site

They’re showing up in Google AI Overviews and LLM research results, getting linked and surfacing in front of buyers.

Now, if I were you, I’d be thinking: why should I care about LLM visibility at all?

Because:

89% of buyers are already using LLMs to research software

AI search traffic will overtake traditional SEO traffic by 2028

ChatGPT is among the top 5 traffic sources for traditional search

So saying I’m helping Series A marketing teams show up in LLMs with product-led content is the flex I didn’t know I’d get to flex 💪

Feels like PLC libraries v2.0 is right around the corner *makes heart eyes*

Okay, enough talk. Let’s dig into the spicy bit:

One, plan decision-making content buyers are already asking LLMs

This is really where I start.

For context, a product-led content strategy rests on two broad content types:

Category + POV content for product-unaware readers

Product education + decision-making content for product-aware readers

And it’s this second type — product-featuring, decision-making content — that matches the exact questions buyers are already putting into LLMs:

Is tool A worth the investment?

How long does it take to implement tool X?

How do I prove the ROI of tool Y?

Answer these clearly in your product-led content, and you massively increase your odds of showing up when buyers search in LLMs.

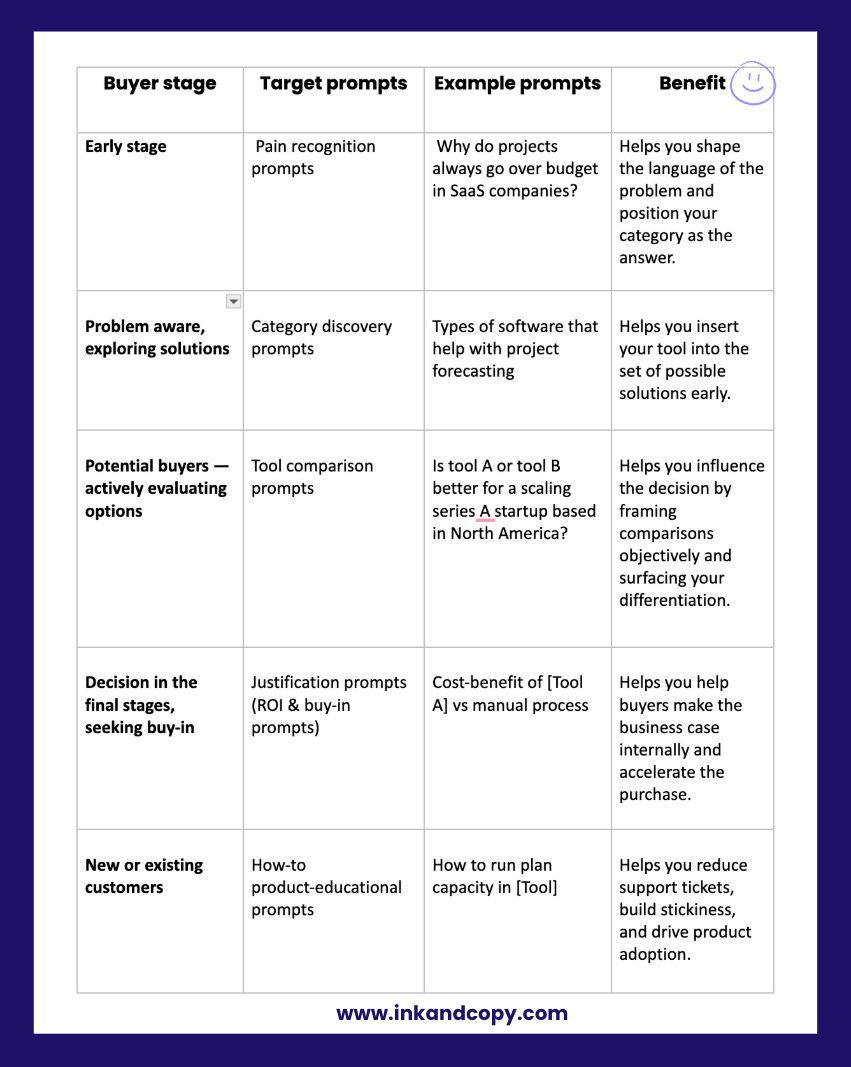

Two, plan content for prompts across the journey

Decision-making content is only one piece of the journey. And LLMs don’t serve content in a funnel sequence; they serve answers to prompts.

So to fully build your LLM visibility:

Plan content for prompts across the buyer’s journey to train AI to pull you into conversations no matter where the buyer starts.

Practically, this means creating content for prompts at each stage: pain recognition → category discovery → comparison → justification.
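
If it helps to see the stage-by-stage plan as data, here is a minimal sketch in Python. The `ContentPiece` class, the `coverage_gaps` helper, the working titles, and the comparison prompt are all made up for illustration; only the four stage names and the two justification prompts come from this post.

```python
from dataclasses import dataclass, field

# Journey stages as named in the post, in order.
STAGES = ["pain recognition", "category discovery", "comparison", "justification"]


@dataclass
class ContentPiece:
    title: str   # hypothetical working title
    stage: str   # expected to be one of STAGES
    prompts: list[str] = field(default_factory=list)  # prompts the piece answers


def coverage_gaps(pieces):
    """Return the stages that have no planned content yet, in journey order."""
    covered = {piece.stage for piece in pieces}
    return [stage for stage in STAGES if stage not in covered]


# Illustrative plan: two pieces, both aimed at late-stage prompts.
plan = [
    ContentPiece(
        title="Is [Tool] worth it?",
        stage="justification",
        prompts=[
            "Is tool A worth the investment?",
            "How do I prove the ROI of tool Y?",
        ],
    ),
    ContentPiece(
        title="[Tool] vs. spreadsheets",
        stage="comparison",
        prompts=["[Tool] or spreadsheets for a small team?"],  # hypothetical prompt
    ),
]

gaps = coverage_gaps(plan)
print(gaps)  # ['pain recognition', 'category discovery']
```

A plan that only covers comparison and justification leaves the early stages empty, which is the gap this layer is meant to close.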

And your strategy can go one step further: map content for new and paying customers too, with how-to product-educational prompts to drive adoption and retention.

Here’s how it looks mapped out:

Three, structure the content so it’s easy for LLMs to pick up

Prompt-to-content mapping is only the strategy layer of the equation.

The next layer is how you package your content because if it isn’t structured for LLMs to parse, it won’t matter how well it maps to prompts.

When I build PLC libraries, this is the layer I spend a lot of time on — turning strong insights into content LLMs can pick up, cite, and recommend.

Here’s what that looks like in practice:

Address one idea per block

LLMs lift in chunks. If you pack three ideas into one paragraph, they’ll get jumbled or dropped. Break sections into atomic, self-contained units so each block stands alone if lifted.

Build question–answer scaffolding

Make sure your answers are declarative, direct, and repeatable in one or two lines. Then expand. This increases the odds of the short version getting surfaced in LLMs, while the depth supports human readers.

Plan for consistent patterns

LLMs pick up clear patterns. So frame sections in a predictable order. For instance, Context → Evidence → Takeaway.

Bake in variant phrasing

Buyers don’t all ask prompts the same way. Include synonymic phrasing within your structure (e.g., “ROI of [Tool]” and “Is [Tool] worth the investment?”) so your content maps to multiple prompt patterns without keyword stuffing.

Keep decision sentences scannable

LLMs love sentences that read like direct conclusions: “For Series A startups, [Tool] is stronger than spreadsheets because…” These declarative judgments are exactly the kind of phrasing AI looks to echo back.

Prioritize clarity over cleverness

LLMs prefer clarity, so you’ll want to balance brand voice (which engages readers) with plain, specific wording. For instance, say “integrates with Trello and Slack” instead of “plays nicely with your tech stack.”
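
A few of these guidelines can be turned into a rough pre-publish check. The sketch below is one possible way to do that in Python, not a tool described in this post: the `AnswerBlock` class, the `check_block` helper, the two-sentence limit as a hard rule, and the sample block are all assumptions for illustration, and the sentence splitter is deliberately naive.

```python
import re
from dataclasses import dataclass


@dataclass
class AnswerBlock:
    question: str        # the main prompt this block answers
    variants: list[str]  # alternative phrasings of the same prompt
    short_answer: str    # the declarative one- or two-sentence answer
    detail: str          # the expanded version for human readers


def sentence_count(text):
    """Naive count: split after ., ! or ? when followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return len([part for part in parts if part])


def check_block(block, max_sentences=2):
    """Return a list of issues; an empty list means the block passes."""
    issues = []
    if sentence_count(block.short_answer) > max_sentences:
        issues.append("short answer runs longer than two sentences")
    if not block.variants:
        issues.append("no variant phrasing listed")
    return issues


# Hypothetical block assembled from phrasing used as examples in this post.
block = AnswerBlock(
    question="Is [Tool] worth the investment?",
    variants=["ROI of [Tool]"],
    short_answer="For Series A startups, [Tool] is stronger than spreadsheets "
                 "because it integrates with Trello and Slack.",
    detail="Context, then evidence, then takeaway.",
)

issues = check_block(block)
print(issues)  # []
```

A check like this only catches structure. Whether the short answer is actually clear and declarative still needs a human read.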

Put together, these three layers are what make a PLC library LLM-ready — not just more content, but content that shows up where buyers are searching.

Tomorrow, I’ll show you what that library actually looks like.

For now, you’ve got the strategy it’s built on — whether you plan to execute it in-house or work with me. (And yes, news on that front coming soon 👀).